NASA LaRC

As of January 2021, I have completed four internships at NASA Langley Research Center in the Office of the Chief Information Officer. Over the course of these internships, my team and I developed unique solutions in augmented and virtual reality (AR/VR) that emphasize the strengths of the virtual toolset while interacting with a physical environment.

Shackleton Crater

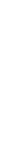

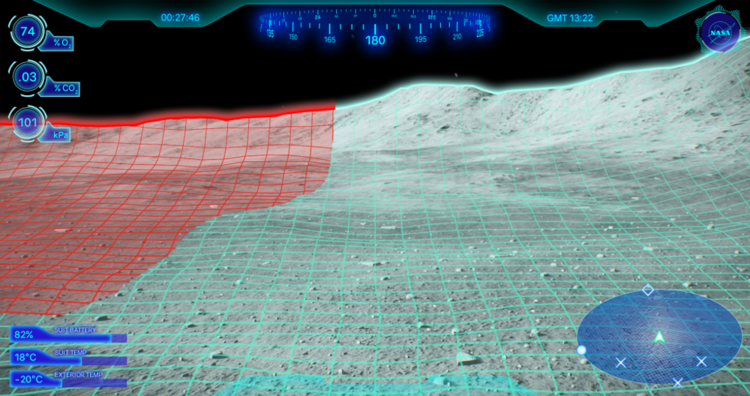

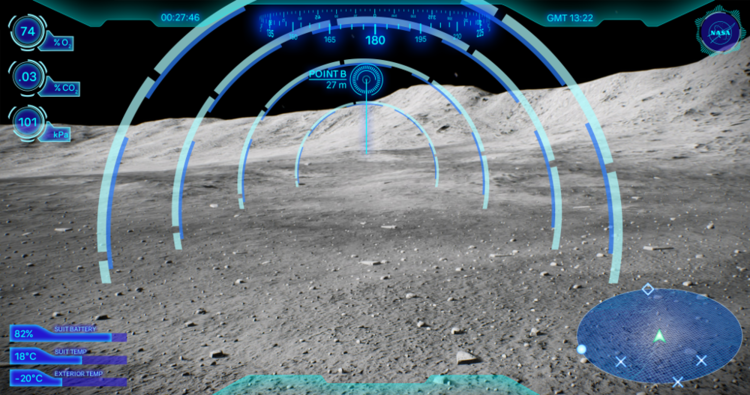

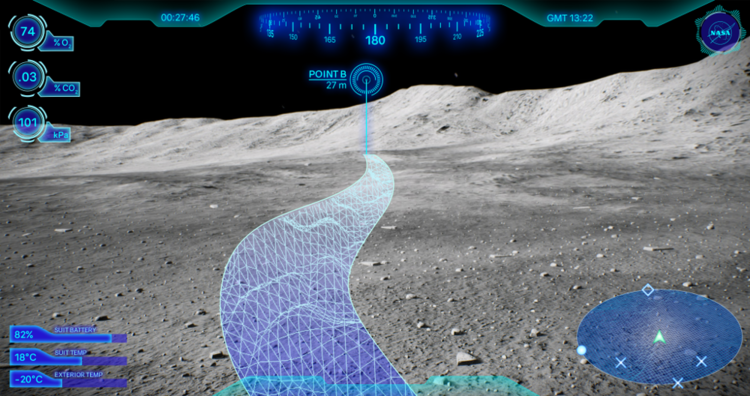

Heads-Up Display

Shackleton Crater is an ice-bearing crater at the lunar south pole and is currently one of the prospective landing locations. Because sunlight strikes the surface at a very low angle, visibility is poor: astronauts face either blinding sunlight or complete darkness.

To address this, we designed the lunar navigation HUD, pictured below. It features an overlaid navigation display with a mini-map and compass. Suit and exterior metadata appear around the edges, and optional overlays show the optimal path, potential dangers, navigational waypoints, and the destination.

We implemented a basic version of the HUD in VR, but only a basic overlay in AR.

ARVRIS

ARVRIS began as the Multiuser Modal Manipulation Tool in late 2017. The first iteration was completed by spring 2018 and included multi-user functionality, push-button model movement, an exploded view, individual component selection, and a basic menu.

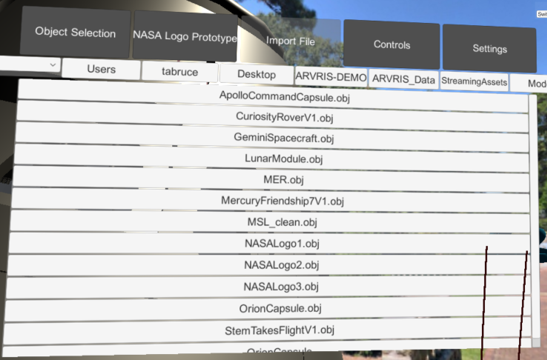

The transition to ARVRIS, the Augmented Reality and Virtual Reality Integrated Systems (pictured below), occurred in summer 2019 with the addition of the Engineering Design Studio team. The transition added dynamic model movement for fine-detail control, control labels in the virtual environment, improved stability and frame rates, multiple-model support, and 3D backgrounds to give the user a sense of orientation. We also implemented advanced UI and component selection, as well as in-scene control mapping and model import, so that models no longer need to be imported from outside the program.

ARVRIS also includes the following features, some of which were inherited from the Multiuser Modal Manipulation Tool and optimized for ARVRIS:

Object manipulation (rotate, scale, move, push, pull)

Scene teleportation (moving around the scene independent from model movement)

Laser pointers (allowing for more precise object indication, especially in a multiuser situation)

Immersive audio (ambient music and audio cues)

Reassemble/Undo (reassembling from the exploded view)
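
As an illustration of how an exploded view and its reassembly can work, here is a minimal Python sketch. It is not the ARVRIS implementation; the function names (`centroid`, `explode`) and the `factor` parameter are hypothetical, and it assumes each component is represented only by a 3D position. Each component is pushed directly away from the centroid of the assembly, and reassembly simply restores the stored original positions.

```python
def centroid(positions):
    """Average position of all components in the assembly."""
    n = len(positions)
    return tuple(sum(p[i] for p in positions) / n for i in range(3))


def explode(positions, factor):
    """Move every component away from the assembly centroid.

    factor = 0.0 leaves the assembly unchanged; larger values
    spread the components further apart.
    """
    c = centroid(positions)
    return [
        tuple(c[i] + (p[i] - c[i]) * (1.0 + factor) for i in range(3))
        for p in positions
    ]


# Hypothetical two-part assembly, used only for illustration.
assembled = [(1.0, 0.0, 0.0), (-1.0, 0.0, 0.0)]
exploded = explode(assembled, factor=1.0)

# "Reassemble/Undo" in this sketch just returns to the stored positions.
reassembled = list(assembled)
```

Keeping the original positions untouched and deriving the exploded positions from them means an undo never accumulates rounding error, which is one reason to prefer this over moving components back step by step.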

Satellite Orbit

The satellite orbit demonstration addresses a need of the GPX SmallSat launch team. It features realistic GPS satellite orbits, generated dynamically as mathematical ellipses. It visualizes the distance between existing GPS satellites and the proposed GPX satellite on a dynamic time scale, making distance relationships easier to understand in VR.
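
To make the idea of orbits as mathematical ellipses concrete, here is a minimal Python sketch. It is not the code used in the demonstration; the function names and the orbital values are hypothetical, and it assumes a two-dimensional orbit with the central body at one focus of the ellipse. It uses the standard polar form of an ellipse, r = a(1 - e^2) / (1 + e cos θ), to sample positions, and measures the straight-line distance between two satellites.

```python
import math


def orbit_point(semi_major_axis, eccentricity, true_anomaly):
    """Position (x, y) on an elliptical orbit, with the central body at the origin.

    true_anomaly is the angle in radians measured from the closest approach.
    """
    r = (semi_major_axis * (1.0 - eccentricity ** 2)
         / (1.0 + eccentricity * math.cos(true_anomaly)))
    return (r * math.cos(true_anomaly), r * math.sin(true_anomaly))


def orbit_path(semi_major_axis, eccentricity, samples=360):
    """Evenly spaced (in angle) points around the ellipse, for drawing the orbit."""
    step = 2.0 * math.pi / samples
    return [orbit_point(semi_major_axis, eccentricity, i * step)
            for i in range(samples)]


def separation(p, q):
    """Straight-line distance between two satellite positions."""
    return math.dist(p, q)


# Illustrative values only, not real orbital elements.
sat_a = orbit_point(semi_major_axis=2.0, eccentricity=0.5, true_anomaly=0.0)
sat_b = orbit_point(semi_major_axis=3.0, eccentricity=0.0, true_anomaly=math.pi / 2)
gap = separation(sat_a, sat_b)
```

Sampling by angle is the simplest way to draw the path. Animating a satellite along it with a time scale would additionally require solving Kepler's equation to convert elapsed time into an angle, which this sketch leaves out.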

5D Scatterplot

This demonstration was intended to show what is possible for data visualization in mixed reality. We used public databases for the proof of concept; the scatterplot pictured below uses a well-known database of flowers. We can graph in five dimensions: the standard X, Y, and Z axes, plus the color and diameter of the points themselves.
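
The five-dimensional mapping can be sketched in a few lines of Python. This is an illustration under assumptions, not the demonstration's actual code: the function names, the blue-to-red color ramp, the diameter range, and the sample rows are all hypothetical. Each of the five data columns is rescaled to the range 0 to 1; the first three become the position, the fourth becomes a color, and the fifth becomes the point diameter.

```python
def normalize(values):
    """Rescale a column to the range [0, 1]; a constant column maps to 0.5."""
    lo, hi = min(values), max(values)
    span = hi - lo
    return [0.5 if span == 0 else (v - lo) / span for v in values]


def to_points(rows, min_diameter=0.02, max_diameter=0.10):
    """Turn rows of five numbers into renderable points.

    Columns 1-3 -> X, Y, Z position; column 4 -> color; column 5 -> diameter.
    """
    columns = [normalize(col) for col in zip(*rows)]
    points = []
    for x, y, z, c, d in zip(*columns):
        points.append({
            "position": (x, y, z),
            "color": (c, 0.0, 1.0 - c),  # RGB ramp from blue (low) to red (high)
            "diameter": min_diameter + d * (max_diameter - min_diameter),
        })
    return points


# Made-up rows for illustration; not taken from any real database.
rows = [
    (1.0, 10.0, 0.0, 5.0, 2.0),
    (2.0, 20.0, 5.0, 10.0, 4.0),
    (3.0, 30.0, 10.0, 15.0, 6.0),
]
points = to_points(rows)
```

Normalizing every column first keeps the plot inside a unit cube regardless of the units of the source data, which is convenient when the plot has to fit in a fixed volume of a room-scale scene.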

VR CAD Repository

The VR CAD Repository is an organized database of 3D models sourced from a variety of branches across NASA Langley, including the Science Directorate, the Engineering Directorate, and the Office of the Chief Information Officer. Prior to my work on the repository, no comparable center-wide system existed.

These models are accurate and to scale, as well as SBU and ITAR compliant. (Although compliance may require some degree of sanitization, the models maintain a high level of accuracy.) To maintain security, the repository uses multiple permission groups, each granting a different level of model access. It is currently accessible to anyone at Langley through a NAMS request for a Windows Drive Share, with plans to transition to SharePoint Online.